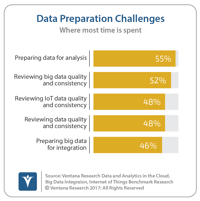

Many organizations continue to struggle with preparing data for use in operational and analytical processes. We see these issues reported in our Data and Analytics in the Cloud benchmark research, where 55 percent of organizations identify data preparation as the most time-consuming task in their analytical processes. Similarly, in our Next-Generation Predictive Analytics research, 62 percent of companies report that they’re unsatisfied because data needed for access or integration is not...

Topics: Analytics, Collaboration, Data Preparation, Datawatch

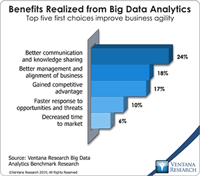

Using information technology to make data useful is as old as the Information Age. The difference today is that the volume and variety of available data have grown enormously. Big data gets almost all of the attention, but there's also cryptic data. Both are difficult to harness using basic tools and require new technology to help organizations glean actionable information from the large and chaotic mass of data. "Big data" refers to extremely large data sets that may be analyzed computationally...

Topics: Big Data, Data Science, Planning, Predictive Analytics, Sales Performance, Social Media, Supply Chain Performance, FP&A, Human Capital, Marketing, Office of Finance, Operational Performance Management (OPM), Budgeting, Connotate, cryptic, equity research, Finance Analytics, Kofax, Statistics, Operational Performance, Analytics, Business Analytics, Business Performance, Financial Performance, Business Performance Management (BPM), Datawatch, Financial Performance Management (FPM), Kapow, Sales Performance Management (SPM)

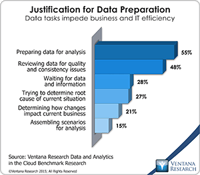

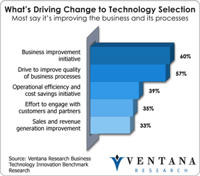

The need for businesses to process and analyze data has grown in intensity along with the volumes of data they are amassing. Our benchmark research consistently shows that preparing data is the most widespread impediment to analytic and operational efficiency. In our recent research on data and analytics in the cloud, more than half (55%) of organizations said that preparing data for analysis is a major impediment, followed by other preparatory tasks: reviewing data for quality and consistency...

Topics: Big Data, Sales Performance, Supply Chain Performance, Human Capital, Marketing, Monarch, Operational Performance Management (OPM), Customer Performance, Business Analytics, Business Intelligence, Business Performance, Data Preparation, Financial Performance, Governance, Risk & Compliance (GRC), Information Management, Uncategorized, Business Performance Management (BPM), Datawatch, Information Optimization, Risk & Compliance (GRC)

Big data has become a big deal as the technology industry has invested tens of billions of dollars to create the next generation of databases and data processing. After the accompanying flood of new categories and marketing terminology from vendors, most in the IT community are now beginning to understand the potential of big data. Ventana Research thoroughly covered the evolving state of the big data and information optimization sector in 2014 and will continue this research in 2015 and...

Topics: Big Data, MapR, Predictive Analytics, Sales Performance, SAP, Supply Chain Performance, Human Capital, Marketing, Mulesoft, Paxata, SnapLogic, Splunk, Customer Performance, Operational Performance, Business Analytics, Business Intelligence, Business Performance, Cloud Computing, Cloudera, Financial Performance, Hortonworks, IBM, Informatica, Information Management, Operational Intelligence, Oracle, Datawatch, Dell Boomi, Information Optimization, Savi, Sumo Logic, Tamr, Trifacta, Strata+Hadoop

Topics: Big Data, Pentaho, Predictive Analytics, Sales Performance, Supply Chain Performance, IT Performance, Operational Performance, Analytics, Business Analytics, Business Intelligence, Business Performance, Cloud Computing, Customer & Contact Center, Financial Performance, Information Applications, Information Management, Location Intelligence, Operational Intelligence, Workforce Performance, Datawatch

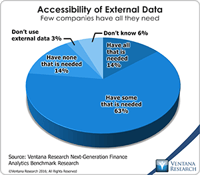

Our recently released benchmark research on information optimization shows that 97 percent of organizations find it important or very important to make information available to the business and customers, yet only 25 percent are satisfied with the technology they use to provide that access. This wide gap between importance and satisfaction reflects the complexity of preparing and presenting information in a world where users need to access many forms of data that exist across distributed...

Topics: Big Data, IT Performance, Analytics, Business Analytics, Business Collaboration, Business Intelligence, Business Performance, Cloud Computing, Customer & Contact Center, Data Preparation, Information Applications, Information Management, Data Discovery, Datawatch, Information Optimization

In the realm of technology that matters for business and IT, our firm continually assesses the latest technology and how it can affect organizations' efficiency and effectiveness. Our benchmark research in technology innovation found that 87% of participants consider it important to increase the organization's value through technology innovation. Every year we apply the knowledge gained from our research and technology briefings to our Technology Innovation Awards.

Topics: Big Data, Datameer, Mobile, Sales, Sales Performance, Social Media, Supply Chain Performance, Sustainability, Customer, ESRI, Globoforce, GRC, HCM, Kronos, Kyriba, Location Analytics, Marketing, NetBase, Office of Finance, Overall Operational Leadership, Peoplefluent, Planview, SQLstream, VMWare, VPI, IT Analytics & Performance, IT Performance, Operational Performance, Analytics, Business Analytics, Business Collaboration, Business Intelligence, Business Mobility, Business Performance, CIO, Cloud Computing, Collaboration, Customer & Contact Center, Financial Performance, Governance, Risk & Compliance (GRC), Hortonworks, IBM, Informatica, Information Applications, Information Builders, Information Management, Information Technology, KXEN, Location Intelligence, Operational Intelligence, Oracle, Workforce Performance, Contact Center, Datawatch, Financial Management, Information Optimization, Johnson Controls Panoptix, Roambi, Service & Supply Chain, Upstream Works, Vertex, Xactly

Business analytics can help organizations use data to find insights that lead to new opportunities and address previously unrecognized issues. One player in this market is Datawatch, known for its tools for information optimization and for harvesting value from big data, including content and documents. I assessed the company earlier this year, and recently our firm recognized its customers' achievements with 2013 Ventana Research Leadership Awards for Information Optimization with Phelps County...

Topics: Big Data, Sales Performance, SAP, Supply Chain Performance, GRC, Office of Finance, Panopticon, Operational Performance, Analytics, Business Intelligence, Business Performance, Customer & Contact Center, Financial Performance, Information Applications, Information Management, Operational Intelligence, CEP, Datawatch, Discovery, Information Optimization, SAP HANA

When organizations need to optimize their business processes and improve operations and decisions, they often speak of having the right information at the right time but don't always make that a priority. This information optimization is often thought to be expensive and time-consuming, especially with the advent of big data and disparate data sources across cloud and on-premises environments, as I have articulated. Datawatch can help businesses get to information of any variety or volume at any time...

Topics: Big Data, MapR, QlikView, Cloud Computing, Information Management, Uncategorized, Datawatch, Information Optimization

Topics: Sales Performance, SAP, Social Media, Supply Chain Performance, Peoplefluent, Planview, Research, Splunk, IT Performance, Operational Performance, Business Analytics, Business Collaboration, Business Intelligence, Business Performance, CIO, Cloud Computing, Customer & Contact Center, Financial Performance, IBM, Information Applications, Information Management, Location Intelligence, Operational Intelligence, Workforce Performance, Ceridian, CFO, CMO, COO, Datawatch, Saba, Technology