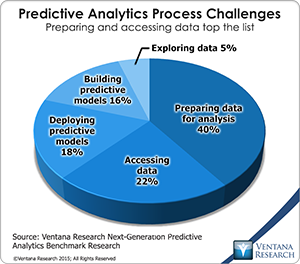

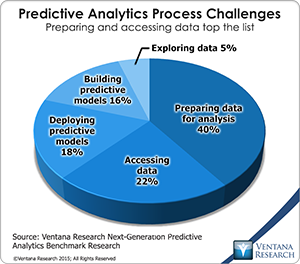

Our research into next-generation predictive analytics shows that, along with a shortage of skilled resources, which I discussed in my previous analysis, the inability to readily access and integrate data is a primary reason for dissatisfaction with predictive analytics (in 62% of participating organizations). Furthermore, this area consumes the most time in the predictive analytics process: The research finds that preparing data for analysis (40%) and accessing data (22%) are the parts of the process that create the most challenges for organizations. To allow more time for actual analysis, organizations must work to improve their data-related processes.

Organizations apply predictive analytics to many categories of information. Our research shows that the most common categories are customer (used by 50%), marketing (44%), product (43%), financial (40%) and sales (38%). Such information often has to be combined from various systems and enriched with information from new sources. Before users can apply predictive analytics to these blended data sets, the information must be put into a common form and represented as a normalized analytic data set. Unlike in data warehouse systems, which provide a single data source with a common format, today data is often located in a variety of systems that have different formats and data models. Much of the current challenge in accessing and integrating data comes from the need to include not only a variety of relational data sources but also less structured forms of data. Data that varies in both structures and sizes is commonly called big data.
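To make the idea of a normalized analytic data set concrete, here is a minimal sketch in Python using only the standard library. Everything in it is an illustrative assumption rather than anything from the research: the two source extracts (`CRM_CSV`, `SALES_JSON`), their field names, and the helper functions are hypothetical. It shows two systems with different formats and key names being put into a common form and blended into one row per customer.

```python
import csv
import io
import json

# Hypothetical extract from a customer system (CSV).
CRM_CSV = """customer_id,name,segment
101,Acme Corp,Enterprise
102,Birch LLC,smb
"""

# Hypothetical extract from a sales system (JSON, different key names,
# numbers stored as strings).
SALES_JSON = """[
  {"custId": "101", "amount": "1200.50"},
  {"custId": "101", "amount": "300"},
  {"custId": "103", "amount": "75.25"}
]"""


def load_customers(text):
    """Parse customer rows into a common form keyed by integer id."""
    customers = {}
    for row in csv.DictReader(io.StringIO(text)):
        cid = int(row["customer_id"])
        customers[cid] = {
            "name": row["name"].strip(),
            # Normalize inconsistent capitalization of category values.
            "segment": row["segment"].strip().lower(),
        }
    return customers


def total_sales(text):
    """Sum sales amounts per customer, converting ids and amounts to numbers."""
    totals = {}
    for rec in json.loads(text):
        cid = int(rec["custId"])
        totals[cid] = totals.get(cid, 0.0) + float(rec["amount"])
    return totals


def build_analytic_dataset(crm_text, sales_text):
    """Blend both sources into one row per known customer."""
    customers = load_customers(crm_text)
    totals = total_sales(sales_text)
    rows = []
    for cid in sorted(customers):
        rows.append({
            "customer_id": cid,
            "segment": customers[cid]["segment"],
            # Customers with no recorded sales get 0.0 rather than a gap.
            "total_sales": totals.get(cid, 0.0),
        })
    return rows


DATASET = build_analytic_dataset(CRM_CSV, SALES_JSON)
```

In this sketch the customer list drives the result, so the sales record for the unknown customer 103 is dropped. Whether to drop, keep or flag such unmatched records is a design choice that depends on the analysis at hand.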

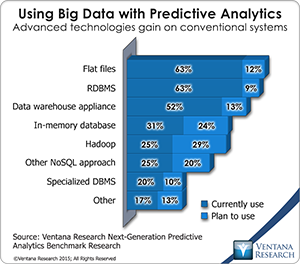

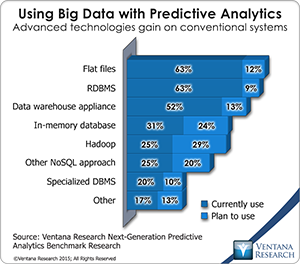

To deal with the challenge of storing and computing big data, organizations planning to use predictive analytics increasingly turn to big data technology. While flat files and relational databases on standard hardware, each cited by almost two-thirds (63%) of participants, are still the most commonly used tools for predictive analytics, more than half (52%) of organizations now use data warehouse appliances for predictive analytics, and 31 percent use in-memory databases, which a further 24 percent plan to adopt in the next 12 to 24 months, the second-highest percentage for planned adoption. Hadoop and NoSQL technologies lag in adoption, currently used by one in four organizations, but in the next 12 to 24 months an additional 29 percent intend to use Hadoop and 20 percent more will use other NoSQL approaches. Furthermore, more than one-quarter (26%) of organizations are evaluating Hadoop for use in predictive analytics, the highest share for any technology.

Some organizations are considering moving from on-premises to cloud-based storage of data for predictive analytics; the most common reasons for doing so are to improve data access (cited by 49%) and data preparation for analysis (43%). This trend speaks to the increasing importance of cloud-based data sources as well as cloud-based tools that provide access to many information sources and provide predictive analytics. As organizations accumulate more data and need to apply predictive analytics in a scalable manner, we expect the need to access and use big data and cloud-based systems to increase.

While big data systems can help handle the size and variety of data, they do not of themselves solve the challenges of data access and normalization. This is especially true for organizations that need to blend new data that resides in isolated systems. How to do this is critical for organizations to consider, especially in light of the people using predictive analytics systems and their skills. There are three key considerations here. The first is the user interface, the most common of which are spreadsheets (used by 48%), graphical workflow modeling tools (44%), integrated development environments (37%) and menu-driven modeling tools (35%). The second is the number of data sources to deal with and which of them are supported by the system; our research shows that four out of five organizations need to access and integrate five or more data sources. The third is which analytic languages and libraries to use and which are supported by the system; the research finds that Microsoft Excel, SQL, R, Java and Python are the most widely used for predictive analytics. Weighing these three considerations against the organization's resident skills, processes, current technology and the information sources that need to be accessed is crucial for delivering value with predictive analytics.

While there has been an exponential increase in data available to use in predictive analytics as well as advances in integration technology, our research shows that data access and preparation are still the most challenging and time-consuming tasks in the predictive analytics process. Although technology for these tasks has improved, the complexity of the data has increased through the emergence of different data types, large-scale data and cloud-based data sources. Organizations must take special care to choose predictive analytics tools that give easy access to multiple diverse data sources, including big data stores, and that provide capabilities for data blending and provisioning of analytic data sets. Without these capabilities, predictive analytics tools will fall short of expectations.

Regards,

Tony Cosentino

VP and Research Director
